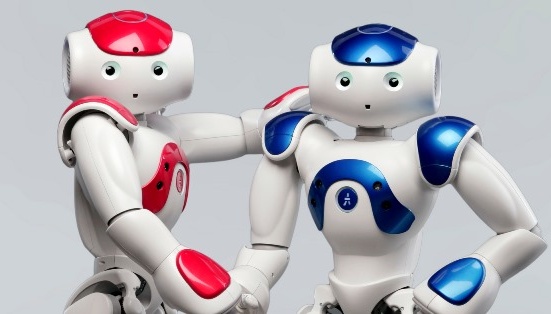

Nao (pronounced “now”) is a medium-sized humanoid autonomous robot, developed by Aldebaran Robotics.

Nao has four microphones fitted into his head and a voice recognition and analysis system. He recognises a set of predefined words that you can supplement with your own expressions, and these words can trigger any behaviour you choose. Voice recognition is available so far in English and French, and we are working on adding six other languages (Dutch, German, Italian, Spanish, Mandarin and Korean). Nao can also detect the source of a sound or voice, so he can attend to that source and start interacting.
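To illustrate how recognised words can be tied to behaviours, here is a minimal Python sketch. It does not use Nao's SDK: `on_word`, `handle_recognised` and the behaviour strings are hypothetical names invented for this example, and a real behaviour would move the robot rather than return a label.

```python
from typing import Callable, Dict

# Hypothetical registry: recognised word -> behaviour callback.
# None of these names come from Nao's SDK.
behaviours: Dict[str, Callable[[], str]] = {}


def on_word(word: str, behaviour: Callable[[], str]) -> None:
    """Associate a word (case-insensitive) with a behaviour."""
    behaviours[word.lower()] = behaviour


def handle_recognised(word: str) -> str:
    """Run the behaviour registered for a recognised word, if any."""
    behaviour = behaviours.get(word.lower())
    if behaviour is None:
        return "ignored"  # word is not in the vocabulary
    return behaviour()


# A couple of predefined words, supplemented with a custom expression.
on_word("hello", lambda: "wave")
on_word("sit", lambda: "sit down")
on_word("play music", lambda: "play favourite.mp3")
```

Calling `handle_recognised("Hello")` runs the behaviour registered for "hello", while a word outside the vocabulary is simply ignored.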

Nao can express himself by reading out any file stored locally in his storage space or captured from a web site or RSS feed. He has two speakers, placed on either side of his head, and his vocal synthesis system can be configured to alter the voice, for example its speed or tone. Vocal synthesis is available in French and English, and we are currently developing other languages. Naturally, you can also send a music file to Nao and have him play it. He accepts .wav and .mp3 formats, which allows you to punctuate your behaviours with music or personalised sounds.

Nao sees by means of two CMOS 640 x 480 cameras, which can capture up to 30 images per second. The first is on the forehead, aimed at Nao’s horizon, while the second is placed at mouth level to scan the immediate environment. The software lets you retrieve the photos and video streams that Nao sees. Yet what use are eyes unless you can also perceive and interpret your surroundings? That’s why Nao contains a set of algorithms to detect and recognise faces and shapes, so he can recognise the person talking to him, find a ball, and ultimately recognise much more complex objects. These algorithms have been specially developed, with constant care taken to use minimal processor resources. Furthermore, Nao’s SDK lets you develop your own modules interfaced with OpenCV (the Open Source Computer Vision library initially developed by Intel). As you have the option to execute modules on Nao or transfer them to a PC connected to Nao, you can easily use the OpenCV display functions to develop and test your own algorithms with image feedback.

Nao is fitted with a capacitive sensor placed on the top of his head, divided into three sections. You can therefore give Nao information through touch: pressing once to tell him to turn off, for example, or using this sensor as a series of buttons to trigger an associated action. The system comes with LEDs that indicate the type of contact. It is also possible to program complex sequences.

Nao can communicate in several ways. For local connections, infrared senders/receivers placed in his eyes allow him to connect to the objects in his environment, serving as a remote control. Nao can also log on to your local network via Wi-Fi, making it easy to pilot and program him through a computer, or any other object that has a Wi-Fi connection. The Wi-Fi key is connected to the motherboard and supports the 802.11a, b and g standards.
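Returning to the head sensor: the idea of using its three sections as buttons, and of programming sequences of touches, can be sketched in Python as follows. This is an illustration only, not Nao's SDK. The section names, the `TouchSequences` class and the action labels are all assumptions made for this example.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical names for the three sections of the head sensor.
FRONT, MIDDLE, REAR = "front", "middle", "rear"


class TouchSequences:
    """Match recent touches against registered sequences of sections."""

    def __init__(self) -> None:
        self._actions: Dict[Tuple[str, ...], Callable[[], str]] = {}
        self._history: List[str] = []

    def register(self, sequence: Tuple[str, ...],
                 action: Callable[[], str]) -> None:
        if not sequence:
            raise ValueError("a sequence needs at least one touch")
        self._actions[sequence] = action

    def touch(self, section: str) -> Optional[str]:
        """Record one touch; run an action if a sequence just completed."""
        self._history.append(section)
        # Try the longest registered sequences first.
        for sequence in sorted(self._actions, key=len, reverse=True):
            if tuple(self._history[-len(sequence):]) == sequence:
                self._history.clear()
                return self._actions[sequence]()
        # Keep only as many touches as the longest sequence needs.
        longest = max((len(s) for s in self._actions), default=0)
        self._history = self._history[-longest:] if longest else []
        return None


pad = TouchSequences()
pad.register((MIDDLE,), lambda: "stop")      # one section used as a button
pad.register((FRONT, REAR), lambda: "wave")  # a two-touch sequence
```

Here `pad.touch(FRONT)` followed by `pad.touch(REAR)` completes the two-touch sequence, while `pad.touch(MIDDLE)` acts as a plain button. One limitation of this simple design: a registered sequence that is also the start of a longer one fires first, so the longer sequence can never complete.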

Besides local communication, Nao can of course browse the Internet and interface with any website to send or retrieve data. Nao robots can also talk to each other and work together. You can choose to connect them directly via Wi-Fi, infrared or even body language. This opens up research possibilities on collaborative work between robots and means that several Nao robots can perform complex tasks such as geographic positioning or pooling analytical capacity.